Jupyter at Bryn Mawr College

Public notebooks: /services/public/dblank / CS371 Cognitive Science / 2016-Fall

1. Representing Time in Connectionist Networks

This notebook explores how time has been represented in artificial neural networks. Complex mathematical models exist, but here we examine three straightforward methods.

For this exploration, we will use the encoding method from this week's lab.

First, we define some text:

text = ("This is a test. Ok. What comes next? Depends? Yes. " +
        "This is also a way of testing prediction. Ok. " +
        "This is fine. Need lots of data. Ok?")

And an encoding methodology:

letters = list(set([letter for letter in text]))

def encode(letter):
    index = letters.index(letter)
    binary = [0] * len(letters)
    binary[index] = 1
    return binary

patterns = {letter: encode(letter) for letter in letters}
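As a quick check on the encoding idea, here is a minimal, self-contained sketch of the same one-hot scheme together with a round trip back to text. The names `sample`, `alphabet`, `one_hot`, and `decode` are illustrative and not part of the notebook. The sketch sorts the alphabet, an assumption made here so that the letter-to-index mapping is the same on every run, whereas `list(set(...))` can order the letters differently between Python sessions.

```python
# Self-contained sketch of one-hot encoding and decoding of characters.
sample = "This is a test."            # short stand-in for the notebook's text
alphabet = sorted(set(sample))        # sorted so the ordering is reproducible

def one_hot(letter):
    """Return a list of zeros with a single 1 at the letter's index."""
    vector = [0] * len(alphabet)
    vector[alphabet.index(letter)] = 1
    return vector

def decode(vector):
    """Hypothetical inverse of one_hot: the letter at the position of the 1."""
    return alphabet[vector.index(1)]

encoded = [one_hot(ch) for ch in sample]
decoded = "".join(decode(v) for v in encoded)
print(decoded == sample)
```

Each vector has exactly one unit switched on, so its length equals the number of distinct characters in the text.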

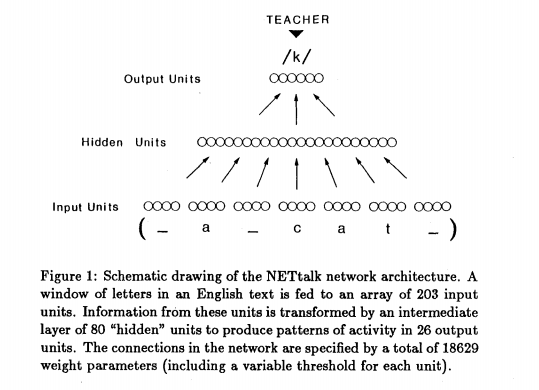

1.1 NETTalk (1987)

Consider the problem of reading. That is, given input text, produce a representation of how that text is pronounced.

Consider the word "cache". The first "c" is pronounced /k/, but the second "c" is pronounced /sh/. Now consider the made-up word: Tacoche. How would you pronounce it? How do you even go about making a guess?

In 1987, the program NETTalk was created to attempt to solve this problem.

203 / 7  # NETtalk's 203 input units cover a 7-letter window, so 29 units per letter

Additional input units are called context or state units.

How is time represented in NETTalk?
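One way to approach this question is to look at what a window-based network actually sees. The sketch below is a self-contained illustration, not the notebook's code: the helper `window` and its `pad` argument are assumptions made for this example. It slides a fixed 7-letter window over a word, so that a letter's neighbours in time become neighbours in space on the input layer.

```python
def window(text, center, radius=3, pad=" "):
    """Return the 2*radius+1 characters centered on `center`.

    Positions that fall outside the text are filled with `pad`
    (an assumption of this sketch).
    """
    chars = []
    for index in range(center - radius, center + radius + 1):
        chars.append(text[index] if 0 <= index < len(text) else pad)
    return "".join(chars)

word = "cache"
windows = [window(word, i) for i in range(len(word))]
for i, w in enumerate(windows):
    print(repr(word[i]), "is presented as", repr(w))
```

The two occurrences of "c" arrive with different surrounding letters, which is the only information such a network has for choosing between the two pronunciations. Nothing is remembered from one window to the next.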

from conx import Network

class NETTalk(Network):
    def initialize_inputs(self):
        pass

    def inputs_size(self):
        # Return the number of inputs:
        return len(text)

    def get_letter(self, i):
        # Positions outside the text fall back to the first letter:
        if 0 <= i < len(text):
            return text[i]
        else:
            return text[0]

    def get_inputs(self, i):
        # Concatenate the patterns for the 7-letter window centered on i:
        inputs = []
        for index in range(i - 3, i + 3 + 1):
            letter = self.get_letter(index)
            inputs += patterns[letter]
        return [inputs, inputs]

pattern_length = len(patterns[" "])

input_length = pattern_length * 7

nettalk = NETTalk(input_length, 80, input_length)

"".join([str(v) for v in nettalk.get_inputs(0)[0]])

nettalk.train(report_rate=10, max_training_epochs=500)

nettalk.get_history()

%matplotlib notebook

import matplotlib.pyplot as plt

plt.plot([item[1] for item in nettalk.get_history()[10:]])

def winning_output(outputs):
    """
    Given outputs, what letter is this associated with?
    """
    value = max(outputs)
    index = list(outputs).index(value)
    for key, pattern in patterns.items():
        if pattern[index] == 1:
            return key
    return "?"

winning_output([0 for i in range(input_length)])

import random

def select(outputs):
    # Roulette-wheel selection: pick an index with probability
    # proportional to its output value.
    index = 0
    partsum = 0.0
    sumFitness = sum(outputs)
    if sumFitness == 0:
        raise Exception("outputs has a sum of zero")
    spin = random.random() * sumFitness
    while index < len(outputs) - 1:
        score = outputs[index]
        if score < 0:
            raise Exception("Negative score: " + str(score))
        partsum += score
        if partsum >= spin:
            break
        index += 1
    return index

pattern_length

test = [random.random() for i in range(pattern_length)]

select(test)

def winning_output(outputs):
    """
    Given outputs, what letter is this associated with?
    """
    index = select(outputs)
    for key, pattern in patterns.items():
        if pattern[index] == 1:
            return key
    return "?"

winning_output(test)

test2 = [0] * pattern_length

test2[10] = .5

test2[11] = .5

winning_output(test2)
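To see how proportional selection differs from always taking the maximum, the following self-contained sketch draws many samples from a tied output vector. It uses the standard library's `random.choices` as a stand-in for the notebook's `select`, which is an assumption of this example. The helper name `roulette` and the fixed seed are likewise illustrative.

```python
import random
from collections import Counter

def roulette(outputs, rng):
    """Pick an index with probability proportional to its value."""
    return rng.choices(range(len(outputs)), weights=outputs, k=1)[0]

rng = random.Random(0)                  # fixed seed so the run is repeatable
outputs = [0.0, 0.5, 0.5, 0.0]          # two equally active units

counts = Counter(roulette(outputs, rng) for _ in range(1000))
argmax_index = outputs.index(max(outputs))

print("argmax always picks:", argmax_index)
print("proportional picks:", dict(counts))
```

Taking the maximum always returns the first of the tied units, while proportional selection splits its choices roughly evenly between them and never picks a unit with zero activation.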

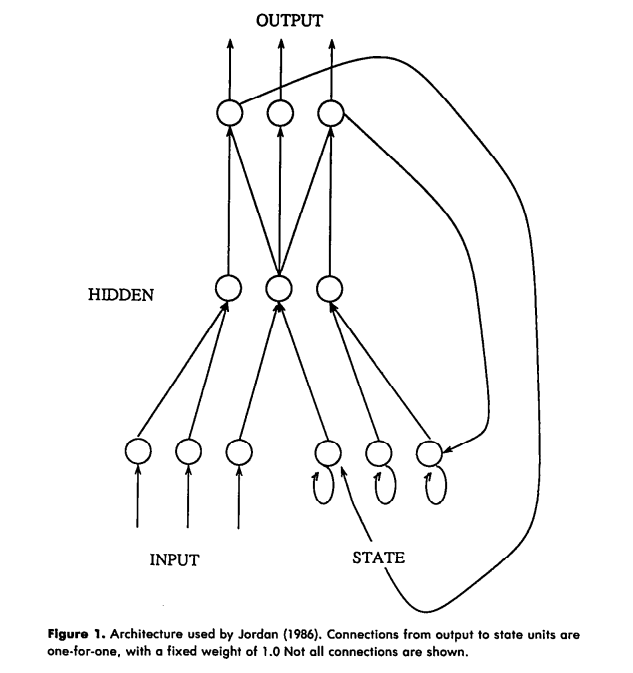

1.2 Jordan Net (1986)

from conx import Network

class Jordan(Network):
    def initialize_inputs(self):
        self.last_output = [0] * pattern_length
        self.last_inputs = [0] * (pattern_length * 2)

    def inputs_size(self):
        # Return the number of inputs:
        return len(text)

    def get_letter(self, i):
        if 0 <= i < len(text):
            return text[i]
        else:
            return text[0]

    def get_inputs(self, i):
        #import pdb; pdb.set_trace()
        inputs = patterns[self.get_letter(i)] + list(self.last_output)
        targets = patterns[self.get_letter(i)]
        self.last_output = self.propagate(self.last_inputs)
        self.last_inputs = inputs
        return [inputs, targets]

jordan = Jordan(pattern_length * 2, 10, pattern_length)

jordan.train(report_rate=1, max_training_epochs=10)
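The feedback loop in a Jordan-style network can be sketched without any library. The example below is a forward-pass-only illustration under stated assumptions: the weights are random and untrained, the layer sizes are arbitrary, and the helpers `make_layer` and `forward` are hypothetical names that do not come from `conx`. It shows only how the previous output is appended to the current letter to form the next input.

```python
import math
import random

def make_layer(n_in, n_out, rng):
    """Random weight matrix: n_out rows of n_in weights (arbitrary values)."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]

def forward(weights, vector):
    """One fully connected layer with a logistic activation."""
    return [1.0 / (1.0 + math.exp(-sum(w * x for w, x in zip(row, vector))))
            for row in weights]

rng = random.Random(0)
n_letters, n_hidden = 4, 3
w_hidden = make_layer(n_letters * 2, n_hidden, rng)  # input = letter + previous output
w_output = make_layer(n_hidden, n_letters, rng)

sequence = [0, 1, 2, 1]              # letter indices, a stand-in for text
last_output = [0.0] * n_letters      # state units start at zero
history = []
for letter in sequence:
    one_hot = [1.0 if k == letter else 0.0 for k in range(n_letters)]
    inputs = one_hot + last_output   # feed the previous output back in
    last_output = forward(w_output, forward(w_hidden, inputs))
    history.append(inputs)

for step, inputs in enumerate(history):
    print(step, [round(v, 2) for v in inputs])
```

Letter 1 appears at steps 1 and 3, yet the two input vectors differ in their second half, because the state units carry a trace of what came before.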

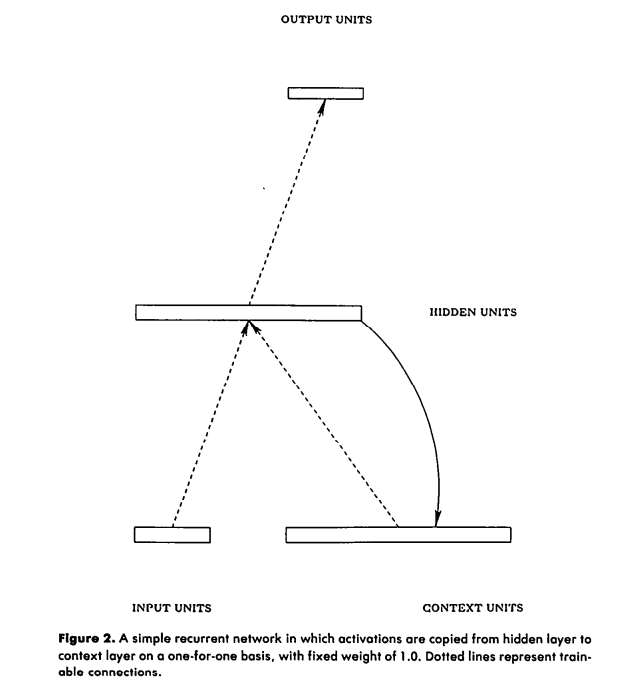

1.3 Simple Recurrent Network (1990)

from conx import SRN

class Elman(SRN):
    def initialize_inputs(self):
        pass

    def inputs_size(self):
        # Return the number of inputs:
        return len(text)

    def get_letter(self, i):
        if 0 <= i < len(text):
            return text[i]
        else:
            return text[0]

    def get_inputs(self, i):
        inputs = patterns[self.get_letter(i)]
        targets = patterns[self.get_letter(i + 1)]
        return [inputs, targets]

elman = Elman(pattern_length, 10, pattern_length)

elman.train(report_rate=1, max_training_epochs=10)
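The prediction task set up above pairs each letter with the letter that follows it. The self-contained sketch below builds those pairs for a short sample string and lists the letters that have more than one possible successor. The names `sample`, `letter_at`, `followers`, and `ambiguous` are illustrative, and `letter_at` mirrors the fallback to the first letter used by `get_letter`.

```python
from collections import defaultdict

sample = "This is a test."

def letter_at(i):
    """Mirror get_letter: out-of-range positions fall back to the first letter."""
    return sample[i] if 0 <= i < len(sample) else sample[0]

# (current letter, next letter) pairs, as in the input/target scheme above
pairs = [(letter_at(i), letter_at(i + 1)) for i in range(len(sample))]

followers = defaultdict(set)
for current, following in pairs:
    followers[current].add(following)

# Letters whose successor cannot be determined from the letter alone
ambiguous = {letter: sorted(nexts)
             for letter, nexts in followers.items() if len(nexts) > 1}
print(ambiguous)
```

A network that sees only the current letter cannot separate these cases. In a simple recurrent network, the context units hold a copy of the previous hidden activations, which gives the network some information about the preceding letters when it makes its prediction.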